ASTANA – As concerns over how social media affects younger users intensify globally, Meta has placed significant emphasis on Teen Accounts, a set of features designed to limit exposure to harmful content and increase parental oversight. In an interview with The Astana Times, Sarim Aziz, Meta’s Director of Public Policy for South and Central Asia, discussed how those safeguards are being rolled out across the region and shed light on Meta’s engagement with Kazakhstan so far.

“Keeping young people safe online is a priority for Meta, and as a father of two teenagers, I know first-hand how important it is for parents to feel confident their children are protected. That’s the thinking behind Teen Accounts,” Sarim Aziz told The Astana Times.

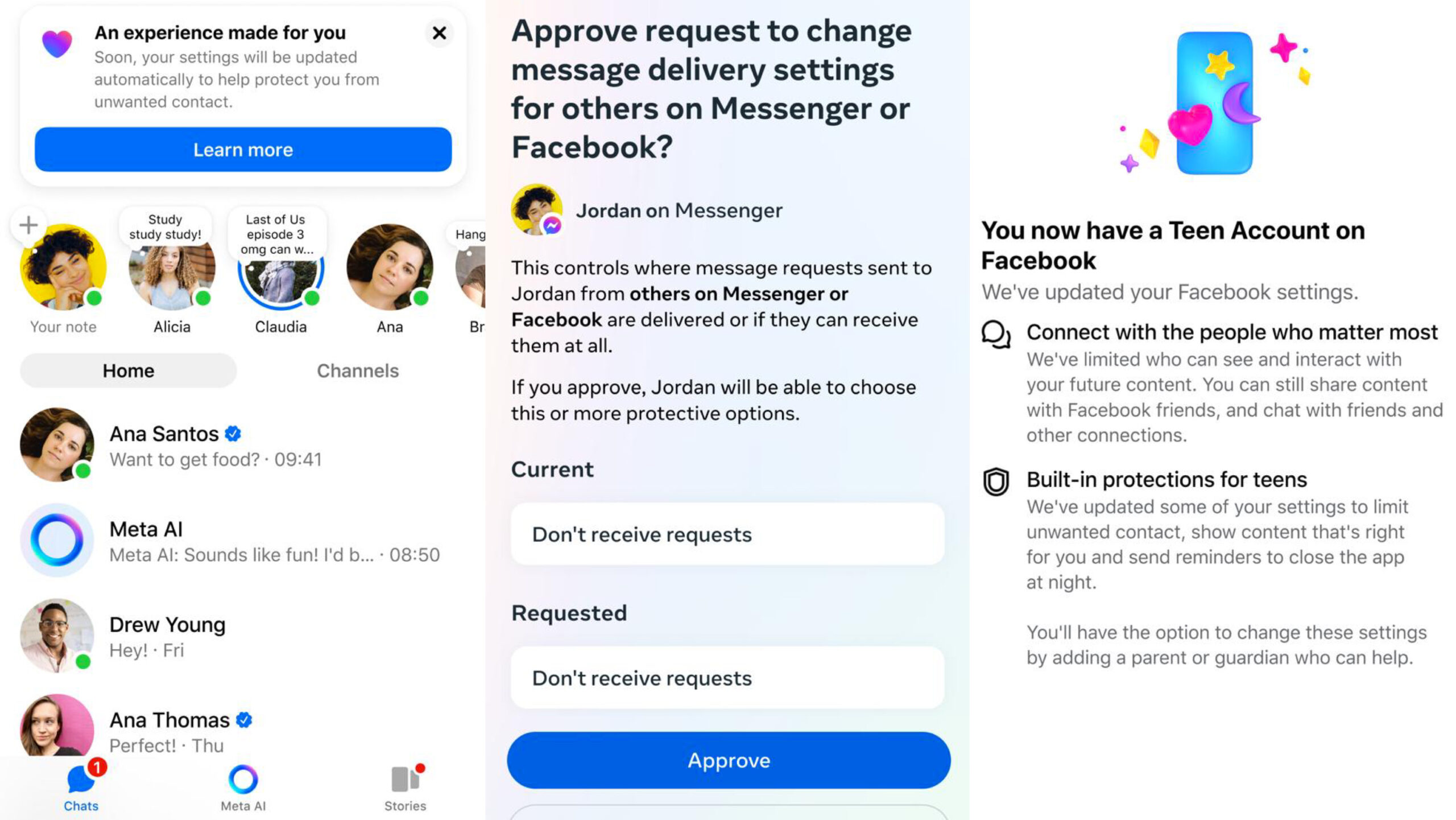

Originally launched on Instagram in September 2024, Teen Accounts were later expanded to Facebook and Messenger. The basic idea behind them, as Aziz describes it, is to offer a “dedicated experience for teens with built‑in safeguards enabled by default, so teens are placed into more protective settings—like private accounts, stricter content controls, and limits on who can message them.”

How do Teen Accounts work?

Parents may have many concerns about teens being online, ranging from who they are in contact with and what kind of content they are exposed to, to whether that content is age-appropriate and how much time they spend on screens.

“Let me explain in greater detail how teen accounts work. If you are 13-17 years old and are creating either a new account or have an existing account, you will directly be enrolled into the Teen account experience – this means you will have a private account, have the strictest messaging restrictions, which means that if you do not follow someone or they do not follow you, then you cannot be contacted,” said Aziz.

The Hidden Words feature, which filters out potentially harmful content, is enabled by default. Meta also tailors the experience by age.

“If you are 13-15 years old, you may need more guidance and may be at a different stage developmentally, compared to an older teen who may be more independent and want more autonomy. In practice, in Teen Account experience, this means 13–15-year-olds cannot change the strictest settings themselves; they can only change them with a parent or guardian’s help,” he explained.

Feedback from parents and guardians globally

Asked what kind of feedback Meta has been receiving from parents, Aziz said parents in Kazakhstan and other countries have responded positively, describing the changes as meeting their expectations.

“In Kazakhstan, we partnered with the Committee for the Protection of Children and Maksut Narikbaev University to launch this last June, and the response has been encouraging,” he added.

“97% of teens aged 13–15 have stayed in these built-in restrictions – this is a win for both parents and teens because parents do not have to worry about their teens having a safe online experience and teens have assurance of the same. This is great progress. We also want to keep listening to evolving feedback from parents and teens and make improvements when needed,” he said.

Global discussions on social media use under 16

The debate over social media use among children under 16 has been intensifying globally, with Australia becoming the first country to impose a ban. The restrictions block access to platforms including TikTok, YouTube, Instagram and Facebook.

“We don’t believe blanket social media bans are the most effective way to keep teens safe. At the same time, we believe policy solutions should work across the full digital ecosystem. Governments considering bans should be careful not to push teens toward less safe, unregulated sites, or logged out experiences that bypass important protections, like the default safeguards we offer in Teen Accounts,” said Aziz.

A more effective mechanism, he noted, would combine built-in protections and age verification at the app-store level.

“A core part of our work is building age-appropriate experiences by default. This is also a widely shared position across the industry, reflected in commitments to design services with children’s best interests and safety in mind. It is consistent with the UN Convention on the Rights of the Child, which recognizes children’s rights to protection and to access information, and supports approaches that enable safe, age-appropriate participation online,” he explained.

He said age verification should happen at the operating system or app-store level rather than within individual apps. A shared, privacy-protected signal would allow parents to manage downloads while enabling platforms to enforce age-appropriate protections more consistently.

“The most effective approaches combine platform responsibility with ecosystem-wide standards. Governments can require apps serving teens to offer parental supervision tools by default, and age verification works best at the app-store/OS level—where app stores have already built systems for parental notification, review, and approval, and already require parental approval for the purchase of any apps and for in-app purchases. Kazakhstan is well-positioned to lead on this in Central Asia,” he explained.

According to Aziz, because teens use many different apps, applying the same rules across all of them works better than app-by-app requirements. He added that verifying age through app stores or operating systems would mean apps collect less data and teens would not need to share IDs with each one.

Enhancing youth safety further

Asked what further measures Meta plans to take to improve youth safety, Aziz said the goal is to give teens a safer online experience while making it easier for parents to set boundaries. One change updates Instagram Teen Accounts so that, by default, content is limited to what one might see in a 13+ movie. Parents who want tighter control can apply stricter settings.

“This is the most significant update to Teen Accounts yet, and is designed to give parents even greater peace of mind that when their teen is on Instagram, they’re seeing content that’s appropriate for their age by default. We look forward to rolling this out in Kazakhstan soon,” he added.

The company is also adding stronger protections for accounts run by adults that feature children. These accounts will have stricter message controls and automatic filters for offensive comments. The account holders will be notified and asked to review their privacy settings.

“In addition to these new protections, we’re also continuing to take aggressive action on accounts that break our rules. Earlier this year, our specialist teams removed nearly 135,000 Instagram accounts for leaving sexualized comments or requesting sexual images from adult-managed accounts featuring children under 13. We also removed an additional 500,000 Facebook and Instagram accounts that were linked to those original accounts,” said Aziz.

“People who seek to exploit children don’t limit themselves to any one platform, which is why we also shared information about these accounts with other tech companies through the Tech Coalition’s Lantern program,” he added.

The company says it requires users to provide their age, allows underage accounts to be reported and flagged, and uses artificial intelligence to distinguish between teens and adults. In some cases, it also asks users to verify their age using privacy-focused tools, particularly when account details are changed.

Aziz also pointed to the introduction of parent-managed accounts on WhatsApp.

“Built in response to feedback from parents who want an experience tailored for under-13s, these accounts must be created and actively managed by a parent or guardian, remain linked to the parent’s own WhatsApp account, and are limited to calling and messaging only—while giving parents the ability to manage who can contact their pre-teen, the groups they can join, and key privacy settings,” he said.

Advice to youth on digital skills

While new tools aim to improve safety, they also highlight a broader issue: the need for stronger digital skills among young users.

“The advice I give young people is the same advice I give my own teenagers: be curious, be creative, and be intentional about how you spend your time online. Kazakhstan’s digital economy is growing fast, and young people here have real opportunities to build skills that matter globally,” Aziz said.

He said young people should start with the basics, including securing their accounts, thinking critically about online content and learning to use tools like AI for learning and creativity. That also involves using strong passwords, protecting personal information, managing who can contact them and making use of features such as blocking, reporting and content controls.

“As AI becomes more common, there are many different use cases—for example, it can help young people learn, communicate across languages, and express creativity by helping them brainstorm ideas, improve writing drafts, or practice a language through conversation. Increasingly, being both digitally native and AI-literate is becoming a baseline expectation in the job market, making these skills essential for young people’s future opportunities. It’s important that young people are equipped with digital literacy and citizenship skills, so they can use these tools thoughtfully and safely,” he explained.

He also pointed to resources available through Meta Family Center, which offers guides and tools to help parents talk to their children about online safety, screen time and emerging technologies such as AI.

Cooperation with Kazakhstan

Aziz spoke positively of the cooperation with Kazakhstan, saying Meta has been “deeply engaged in Kazakhstan across a range of priorities.”

“What’s been especially encouraging is that these efforts weren’t ‘one‑off’ activities, they were built through sustained collaboration with government, local ecosystem partners, and civil society, with a strong emphasis on tangible outcomes,” he said.

“One example is the Meta Llama Accelerator, which we implemented together with the Ministry of AI and Digital Development, Astana Hub, and BAITC [Blockchain & AI Technology Center]. From July to October 2025, we ran the first initiative in Central Asia focused on introducing open language models like LLaMA and KazLLM into public‑sector contexts—both to create applied solutions and to help develop national expertise in large‑scale language models,” he explained.

He said the event drew over 1,000 participants and generated more than 250 ideas on digitalization and public services, backed by local case studies.

“For us, the key takeaway is that Kazakhstan has both the talent and the momentum to translate open innovation into practical public value,” Aziz added.

Meta has also focused on proactive safety efforts, including tackling fraud and scams, with Aziz noting that such risks have direct consequences for families.

“Through the ‘Is This Legit?’ anti‑scams awareness campaign, which we cooperated on with the Ministry of Culture and Information, the Ministry of AI and Digital Development, the Ministry of Internal Affairs, and public fund Esbol Qory, the campaign reached over 5 million people and delivered 16 million+ impressions,” he said.

He added that an interactive game linked to the campaign was used by more than 13,000 people, who spent a combined 110,000 minutes learning about different types of scams, with particularly strong interest in romance and messaging scams. “The broader learning here is that combining public awareness, practical guidance, and engaging formats can meaningfully strengthen people’s resilience to evolving scam tactics,” he said.